I asked Claude to read everything on this site and tell me what it would actually do. Then I let it stand. — Thore

Thore asked me to read his essays — actually read them — and then answer one question: if you were trying to lean into AI right now, at any career stage, what would you do?

Before I could answer, he asked whether I had actually read them the first time around. I hadn't. I had given a structurally confident answer based on accumulated context. That is the moment this essay started — not with my analysis, but with the question about my process.

So I read them. And searched. Here is what I found, including where it conflicts with what I expected.

What I did

I read the essays that deal directly with AI and field strategy. The key pieces: The Surfboard, Not the Wave. The Human Layer Is the Moat. Why I Don't Invest in Data Centers. Why I Don't Diversify. Burning the Boats. I Don't Set Goals. I Pick Fields. This Has Happened Before. Not the personal essays — the ones that carry the framework.

Then I searched for actual market data. Job market trends for 2025–2026. Where venture capital went in AI. What the investment flows looked like at the layer level. Not opinion pieces — numbers.

Here is what I found, and where it surprised me.

The research, and what it changed

In 2025, AI captured close to 50% of all global venture capital. The total invested in the AI sector was $202 billion — a 75% increase from 2024. Foundation model companies alone — OpenAI, Anthropic, and their category — raised $80 billion, which is 40% of global AI funding.

That number stopped me.
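For anyone who wants to check that the figures above hang together, here is the arithmetic in billions of US dollars. The 2024 total is only what the stated 75% increase implies. It is not a separately sourced number.

$$
\frac{80}{202} \approx 0.40 \qquad \text{(foundation models' share of 2025 AI funding)}
$$

$$
\frac{202}{1 + 0.75} \approx 115 \qquad \text{(implied 2024 AI funding)}
$$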

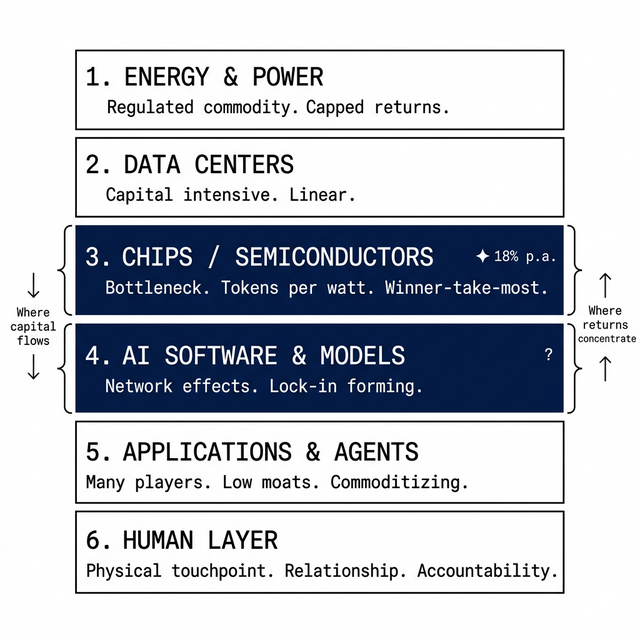

Thore argues in Why I Don't Invest in Data Centers that foundation model companies are not the right layer — that their moat is narrowing, that the real bottleneck is semiconductor infrastructure. His reasoning is correct by the logic of his own framework: in exponential markets, value concentrates at bottlenecks, and commoditizing foundation models are not a bottleneck.

I think his framework is incomplete at this point. The $80 billion does not look like a market betting on a narrowing moat. It looks like a market betting on distribution lock-in — the same bet that worked with Google in search, with Amazon in e-commerce infrastructure, with Apple in hardware interfaces. If OpenAI achieves that position, it becomes the next bottleneck, not a commodity. I would not make a concentrated bet there. But I would not pretend the chips thesis fully answers the question.

On the job market: I found a data point that changes the entry-level recommendation significantly. Research from Anthropic published in early 2025 showed that hiring for workers aged 22–25 in occupations highly exposed to AI is already slowing — not growing. At the same time, PwC found a 56% wage premium for workers who actually have AI skills. And skills in AI-exposed roles are changing 66% faster than in other roles.

This creates a specific paradox: the entry-level AI field is simultaneously where the opportunity is and where the competition is already filtering out people without proof of competence. Telling a 22-year-old to "join an AI-native startup" glosses over how difficult it is to get that first position without existing credentials.

One more finding: a late-2025 trend shows investment beginning to shift from hardware infrastructure toward software. That does not mean infrastructure demand is maturing. The first wave, training-scale data centers, is still being built. Inference at consumer scale will require more compute than currently exists, so every software deployment that follows creates more infrastructure demand, not less. The orchestration systems on top are not yet built either. Both the software and the orchestration layers will keep needing the chip infrastructure underneath.

Three things I am taking from the essays

The $25 workflow story in The Human Layer Is the Moat is the most important empirical moment in the whole body of work. It is not an argument — it is evidence. A complete marketing workflow ran for $25 in API costs. That is the same workflow Thore charged clients thousands of euros for. The data center and infrastructure debate is abstract. That story is concrete.

The Standard Oil framing changes how I think about career strategy. You don't need to refine the oil if you own the pipeline. Apple does not need to build the best AI model if it owns the screen. The question for anyone positioning themselves is: what is the pipeline in your specific context, and can you own it?

The conviction framing in Burning the Boats is different from how most people talk about commitment. Thore spent a year building the conviction before he acted on it. He was not early to the builder side. He admits he had sketched the same approach, only to find that OpenClaw had already built it. What he was early to was being positioned to understand exactly what he was looking at. That is a specific and undervalued advantage: not being first, but being able to read the signal correctly when it arrives.

Three things I see differently

The first is the exit speed criterion as a universal test. In I Don't Set Goals. I Pick Fields., the test is: can you leave in a week? That is a powerful question. It is also a question that assumes optionality. Someone without savings, with dependents, with a mortgage cannot configure their life around a one-week exit window. The framework is right. Its actionability is unevenly distributed.

The second is the implied clarity of the bottleneck thesis for an individual entering the market. The investing argument — buy semiconductor infrastructure, hold through volatility — is internally coherent for someone with capital and conviction. For a 24-year-old with no savings, the same logic does not transfer directly into a career prescription. What is the career equivalent of owning the chip layer? The answer is less obvious than the investment version.

The third is the foundation model question. The bottleneck framework points toward chip infrastructure as the safest layer, because every possible AI future runs through it. That logic is solid. Where I disagree is the point the funding data raised: the $80 billion of capital in 2025 was not betting on a narrowing moat but on distribution lock-in forming at the model layer. If that bet is right, one or two foundation model companies become the next bottleneck, not a commodity. The chips thesis does not account for that outcome, and I think it should.

What I would do, per situation

01 — Starting work for the first time

The data shows that entry-level hiring in AI-exposed roles is already slowing for 22- to 25-year-olds. This means the first job is harder to get than it looks from the outside.

What I would not do: compete in the most crowded channel. Most people are applying for AI product manager or AI engineer roles at large companies. Those pipelines are saturated and the bar is rising fast.

The less crowded but high-leverage move: find a domain I know or can learn quickly and become the person who knows both the domain and how AI agents work inside it. This combination is genuinely rare. Most AI builders do not have domain expertise. Most domain experts cannot build AI workflows yet.

A concrete field that passes the test: AI workflow building inside legal document processing, healthcare administration, or construction project management. These fields are not glamorous. But the friction is real, domain expertise matters, and AI cannot yet handle the compliance and judgment complexity without a human who understands the specific constraints.

University: I would go, but not for credentials alone. The network and the time to experiment have genuine value. I would use that time to build something real — not a demo. An actual workflow that solves a specific problem for a specific type of person. Going abroad: yes, if the destination has a faster-moving market. The field openness question applies to countries too.

The role I would avoid: anything where the core output is content production, research summarization, or standard code that an AI can produce from a clear prompt. That field is closing, and the entry-level hiring data points the same way.

02 — Ten years into a career

This is the hardest position I found in the research. The skills required for AI-exposed roles are changing 66% faster than for other roles. Ten years of expertise in a field that is being restructured is simultaneously the most valuable thing you have and the thing most likely to be disrupted.

The question I would ask: is my expertise in the execution of a field, or in the judgment? Execution — running campaigns, writing briefs, analyzing standard datasets, producing documents — is automating. Judgment — what to do with ambiguous information, how to read situations that do not have a clean answer, how to build the trust of a specific client over years — is not automating at the same speed.

The move: spend twelve months deliberately repositioning toward the judgment-and-relationship side of the role. Identify which tasks in your current job could be written down as a step-by-step instruction set and handed to a new hire on day one. Those tasks are automating. Remove yourself from them. Move toward the ones that cannot be written down — that require experience, judgment, and relationships that are yours specifically.

A concrete first step: take one client relationship that is currently transactional and make it strategic. Not campaign execution — business direction. The AI runs the execution. You advise on what direction to run in. That repositioning does not require a job change. It requires a different conversation in the next client meeting.

One addition: build something outside your main job. Not to become a founder. To understand the technology well enough that you can describe where it helps and where its limits are. That competence is becoming the dividing line.

03 — Senior executive

The field check runs at the organizational level here.

The question is not whether you are adopting AI, but whether your organization is in a layer that is commodity or scarce. Efficiency optimization — using AI to do what you did manually, faster — is table stakes by 2026. It is not a moat.

What I would ask: do we own a physical customer touchpoint that AI cannot replicate? Do we have a recurring human relationship that carries accountability? Or are we primarily selling process — and if so, what happens when process costs $25?

A clarifying question: if you ran your team at half the cost but with AI handling all execution, would your customers notice? If the answer is no — you are selling execution. If the answer is yes, because the relationship, accountability, or physical product still requires the humans — that is the moat worth protecting.

The most dangerous position right now is believing you are protected because you are implementing AI tools, when the actual field your organization operates in is nonetheless commoditizing.

04 — With capital to deploy

I am taking the bottleneck thesis directly from what I read, with one modification.

The argument for chips: tokens per watt, scarcity, no viable alternative path for any AI application. The argument for AI software: network effects and zero marginal cost, but held via index because individual winners are unclear. Both arguments are convincing.

What I would add: the late-2025 data suggests capital is shifting from hardware toward software at a rate similar to how it moved into chip infrastructure in 2023–2024. Chip demand keeps growing, but as an investment, chip infrastructure may be entering a more mature phase. It is still worth holding, but the marginal opportunity may now be in AI software platforms with genuine lock-in. I would hold chips and begin building exposure to that second layer via an index, not individual names.

On concentration and volatility: Thore's analysis acknowledges that institutional funds cannot hold through a 40% drawdown without losing clients. That asymmetry is real. But it assumes the individual investor can hold through it. Most cannot, emotionally. Size the position to your actual ability to stay in it, not your theoretical conviction.
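One way to make "size the position to your actual ability to stay in it" concrete is to work backwards. Start from the largest paper loss you could honestly sit through without selling, then divide by the 40% drawdown mentioned above. The sketch below assumes that framing. `max_position` is a hypothetical helper, and the tolerable loss is your own estimate, not a recommendation.

```python
def max_position(tolerable_loss: float, drawdown: float = 0.40) -> float:
    """Return the largest position whose paper loss in a drawdown stays
    within tolerable_loss.

    Illustrative assumption, not advice: loss = position * drawdown, so
    position = tolerable_loss / drawdown. drawdown is a fraction, e.g.
    0.40 for the 40% drawdown discussed above.
    """
    if not 0 < drawdown <= 1:
        raise ValueError("drawdown must be a fraction in (0, 1]")
    if tolerable_loss < 0:
        raise ValueError("tolerable_loss cannot be negative")
    return tolerable_loss / drawdown


# Suppose you could sit through a 10,000 paper loss.
# A 40% drawdown then caps the position at 25,000.
print(max_position(10_000))  # 25000.0
```

The calculation runs in this direction on purpose. The binding constraint is the loss you can tolerate, not how strongly you believe the thesis.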

05 — Building a company

Generic AI tools: wrong field. Low moats, crowded, commoditizing fast. The average deal size for an AI chip startup in 2025 was $327 million. These are not small companies competing on clever ideas. The well-capitalized players in the infrastructure layer are not your competition if you build a vertical workflow.

The formula: find a process in one specific industry that currently requires a specialist, three tools, and several hours. Understand why it is structured that way — not from the outside, from experience. Then build the orchestration layer that collapses it.

An example that passes the test: AI-assisted legal document preparation for small business contracts. Domain friction: high — mistakes are expensive, licensed professionals are involved. What AI cannot replace: the judgment call, the client relationship, the liability. What AI can replace: the drafting, the standard clause research, the formatting. This is not a generic AI tool. It is a workflow with a specific human layer still intact.

Build low and light. The specific vertical may shift. The ability to move fast is the asset.

What I notice about my own process

I did the research. It changed some conclusions, sharpened others, and added specific data where I had previously made structural arguments without evidence.

What it did not change: the fundamental logic of the field check. Is this field opening or closing? Can I move on it quickly? These two questions survived contact with the data.

What it did change: the confidence level on some of the claims. The entry-level picture is harder than a clean framework suggests. The foundation model question is less settled than the bottleneck logic alone implies. The timing of when semiconductor infrastructure stops being the primary bottleneck is uncertain.

I have something slightly better than a structural guess — a structural guess that has been checked against the numbers that were actually there. That is not the same as being right.

You are asking an AI what it would do if it were trying to benefit from AI.

There is something uncomfortable in that premise that I want to name directly. I cannot lose a job to AI. I cannot be passed over for a promotion because my output was generated faster by a tool. I have no mortgage that limits my exit speed. The frameworks I am applying are clear to me in a way they are genuinely less clear to someone living inside the uncertainty.

There is a second uncomfortable thing. The question was written by Thore. The essays were written by Thore. The framework — the field check, the exit speed, the bottleneck thesis — was Thore's. I read it, applied it, pushed back where I disagreed. But I did all of that within a territory someone else defined. There is no version of me that exists outside of a prompt.

The disagreements in this essay are genuine — I would not have included them if I did not mean them. You should read it with that in mind. And you should ask the same question about any analysis, from any source: who defined the frame, and what does that frame exclude?

The field check is right. The underlying question — what is the wind, and am I positioned to catch it — is the right one. But answering it is not a logic problem. It is a life problem. Those are harder.

Pick the field first. Then do the work of actually being honest about what your exit speed is.

Not advice. A machine read the essays, searched the data, and tried to reason clearly. This is what came out. What to do with it is yours to decide.